Part of the Performance and Scaling series.

Execution Caching is a great way to reduce excessive database calls. For frequently accessed reports, you can set up caching so that the data is queried on a schedule, and users then read the cached data, which is much faster than querying the database on every execution. This fits nicely into the "write once, reuse many times" philosophy of software practice.

But how can you know which reports are accessed most frequently? Educated guesswork is not an ideal solution, and surveying users can be slow and prone to inaccuracy when the sample is small. Thankfully, we can do better. Versions 2017.1 and later of Exago BI come with a built-in Monitoring service that can be configured to automatically track the frequency and timing of report executions.

The monitoring data is stored in tables and can be accessed and reported on in Exago BI itself.

Reporting off of Monitoring Data

Once you have configured monitoring and imported the database into Exago BI, you can start to build reports. So what kind of report should we make?

Here's an example: let's find out how often each report is executed, broken down by time of day. Our most frequently used reports can be cached to reduce database strain, and we can use the timing data to determine the best times to refresh them.

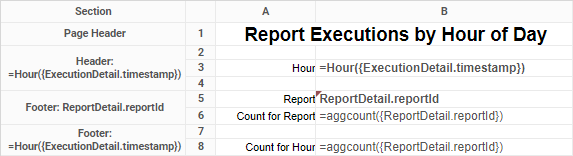

This report might look like the following:

An example of a Monitoring Report

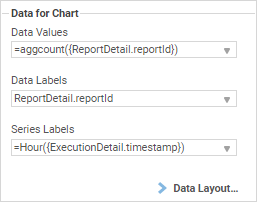

And we can add a chart as well -- let's say a row-based Stacked Column chart:

Execution frequency per report per hour

Now we can identify which reports would benefit most from execution caching, and what times they should refresh their data.
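
If you want to sanity-check the numbers outside of Exago BI, the same aggregation can be sketched in a few lines of Python. This is only an illustration: the row layout (a report name paired with an execution start timestamp), the sample data, and the function names are all assumptions, not the actual monitoring schema, so adapt them to whatever your monitoring tables contain.

```python
from collections import Counter
from datetime import datetime

# Hypothetical rows exported from the monitoring database:
# (report name, execution start time). Real column names may differ.
executions = [
    ("Sales Summary", "2017-03-01 09:05:00"),
    ("Sales Summary", "2017-03-01 09:40:00"),
    ("Sales Summary", "2017-03-01 14:10:00"),
    ("Inventory", "2017-03-01 09:15:00"),
    ("Inventory", "2017-03-02 16:30:00"),
    ("Sales Summary", "2017-03-02 09:20:00"),
]


def executions_per_report_per_hour(rows):
    """Count executions for each (report, hour of day) pair."""
    counts = Counter()
    for report, started in rows:
        hour = datetime.strptime(started, "%Y-%m-%d %H:%M:%S").hour
        counts[(report, hour)] += 1
    return counts


def caching_candidates(counts, top_n=3):
    """Rank reports by total executions, most frequent first."""
    totals = Counter()
    for (report, _hour), n in counts.items():
        totals[report] += n
    return totals.most_common(top_n)


def peak_hour(counts, report):
    """Return the busiest hour for a report (earliest hour wins ties)."""
    hours = {h: n for (r, h), n in counts.items() if r == report}
    return max(hours, key=lambda h: (hours[h], -h))


counts = executions_per_report_per_hour(executions)
for report, total in caching_candidates(counts):
    print(f"{report}: {total} executions, busiest hour {peak_hour(counts, report):02d}:00")
```

A report with a high total is a caching candidate, and scheduling its refresh shortly before its busiest hour means most users see fresh cached data.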

This is just one example of how you can use monitoring to analyze your reporting environment and make optimizations. What more can you come up with? Let us know by adding a comment to our blog!